I remember standing in a high-stakes war room at Amazon, staring at a dashboard that identified $176M in annualized savings. On paper, it was a data scientist’s triumph, a perfect orchestration of predictive analytics and supply chain logic. In reality, it was a ghost story. Despite the math being ironclad, the floor managers weren’t using the tool. They were overriding the algorithms with “gut feelings” and sticky notes. That was the moment I realized that in the enterprise, the most dangerous ghost in the machine isn’t a technical bug; it’s the human instinct to resist what we don’t trust.

We are currently witnessing a multibillion-dollar arms race in corporate technology. Companies are pouring capital into modern data stack migrations, convinced that the right tech stack will solve their efficiency problems. But there is an unaddressed defect in the assembly line: the human being at the end of the data stream. In my experience leading global operations and data governance at Amazon and ADP, I’ve seen perfectly engineered algorithms fail not because of the code, but because of a psychological rejection by the people expected to use them.

We are essentially building Ferraris for drivers who are too terrified to take the parking brake off. We teach employees to drive an automatic and then give them a car with a manual transmission. Our training, expectations and implementations are misaligned.

The bottom line: The “aversion tax”

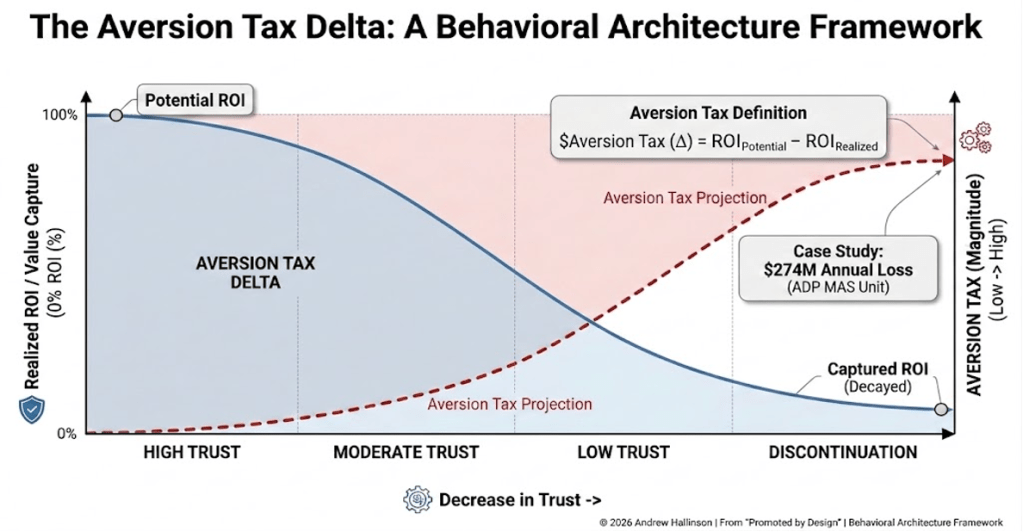

An algorithm that is 99% accurate but only 10% utilized isn’t a breakthrough; it’s a bad investment. In the C-suite, we often treat digital transformation as a technical milestone we can check off. In reality, it is a psychological one. Every dollar you spend on AI is currently subject to an aversion tax: the literal cash value lost to human friction.

I’ve mapped this phenomenon into a framework I call the aversion tax delta. It represents the gap between potential and realized ROI.

Andrew Hallinson

The math is brutal.

If your adoption rate is 10%, your “tax” is effectively 90% of your investment. Finding a $200 million defect is just math; the real work is what I call the “last mile of adoption.” If a senior analyst doesn’t trust the automated recommendation, or if they feel the machine is coming for their job, they will return to the manual “tribal knowledge” they’ve used for a decade. At that moment, your multi-million-dollar investment becomes “shelfware.”
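As a rough illustration of that arithmetic, here is a minimal Python sketch. It assumes the simplest possible reading of the aversion tax, namely that the value lost scales linearly with the share of people who do not use the tool. The function name `aversion_tax` and the $1 million spend are hypothetical, chosen only for this example; the article does not define a formal formula.

```python
def aversion_tax(investment: float, adoption_rate: float) -> float:
    """Estimate the share of an investment lost to non-adoption.

    Assumption (not from the article): the loss is linear in the
    fraction of intended users who do not adopt the tool.
    """
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    return investment * (1.0 - adoption_rate)


if __name__ == "__main__":
    spend = 1_000_000  # hypothetical AI investment in dollars
    tax = aversion_tax(spend, adoption_rate=0.10)
    print(f"{tax:,.0f}")  # prints 900,000
```

Under this linear assumption, raising adoption from 10% to 50% on the same spend would cut the tax from 90% to 50% of the investment, which is why the adoption rate, not the model accuracy, dominates the result.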

The 3 psychological walls

To fix this, we have to stop acting like IT managers and start acting like behavioral architects. My research into AI adoption highlights three specific reasons I see people subconsciously sabotage ROI:

- The black box paradox: People don’t trust what they can’t explain. If an AI tells a senior leader to change a configuration but won’t explain “why,” that leader will protect their P&L by ignoring the machine. We have prioritized black box efficiency over explainable AI (XAI), and it’s costing us millions in trust.

- Identity threat: We have spent years rewarding employees for their intuition. When a machine automates that intuition or removes the perceived autonomy, it triggers defensiveness. In my work with over 1,400 stakeholders, I’ve found that if you don’t redefine your analysts as strategic partners rather than data processors, they will see the AI as an enemy rather than a tool.

- The perfection trap: Research shows that humans are far less forgiving of a 5% error from a machine than a 20% error from a human peer. We hold AI to a standard of infallibility, and when it stumbles or hallucinates, we use that one error as an excuse to shut the whole project down.

From theory to 367% velocity: The ADP case study

I put these theories to the test recently while leading the OneData migration at ADP. When I took over the project, we were staring at a January 2027 completion date. The technical hurdles were significant, but the “aversion tax” was higher. I saw a global team that was terrified that a cloud migration meant a loss of control and transparency.

Instead of pushing harder on the tech, I applied behavioral architecture. We shifted the focus from data governance, which feels like a set of restrictive rules, to data democratization, which feels like power. We gave stakeholders ownership through automated quality tools and intuitive interfaces.

The result? We didn’t just meet the deadline; we pulled it forward to May 2026 and are sitting comfortably ahead of schedule. We increased migration velocity by 367% and have already realized $400k in immediate cost avoidance by deprecating legacy systems ahead of schedule. We succeeded because we solved for the person, not just the partition. We closed the digital transformation gap by making the human the owner of the data, not the subject of the machine.

The executive mandate

The next era of leadership won’t be won by the CEO with the biggest data lake or the most advanced LLM. It will be won by the leader who understands the people using them.

If you are a CIO or COO, stop hiring AI experts in a vacuum. Start building an AI-ready culture. Treat your people integration with the same engineering rigor you apply to your Snowflake migration or automation implementation. Investing in AI without solving for the psychology of its adoption is like buying a high-performance engine and failing to put oil in it. It might look good on the showroom floor, but it will eventually seize.

My research for my forthcoming book, “Promoted by Design,” has taught me one thing: You cannot code your way out of a culture problem. Stop looking at the dashboard and start looking at the driver.

The math is simple: Ignoring the human side of AI is a multimillion-dollar mistake you can no longer afford to make.

This article is published as part of the Foundry Expert Contributor Network.
