Quality data is vital for the success of any IT initiative. That’s especially true for AI projects. While bad data will always yield bad results, the stakes are exceptionally high for AI, where poor data could lead to serious financial loss, regulatory fines, and reputational damage. Good data that feeds a successful initiative, however, could deliver a significant and possibly game-changing strategic advantage.

“In the world of AI, it’s garbage-in, garbage-out — squared,” says Satya Jayadev, vice president and CIO at Skyworks Solutions, a maker of semiconductors for wireless networks. “The secret of any good AI system is how well you build your data layers. It’s important to build that architecture and infrastructure — to understand the data source, to generate the data, and to build a single data platform,” Jayadev says.

For Jayadev and others, that means doubling down on a data lake, data warehouse, or data lakehouse implementation as a single source of truth for AI, whether traditional machine learning (ML), generative AI, or agentic AI.

More than a decade ago, when big data burst onto the scene, data lakes emerged to accommodate unstructured data as a source of analytic insights. A data lakehouse, sometimes called a query accelerator, contains unstructured data like a data lake but adds layers of structure, as a data warehouse does, to deliver insights faster and more economically.

CIOs are putting these and other data technologies to work to ensure their data pipelines are robust and deliver the quality needed to achieve transformative value from their AI strategies.

Better data = better AI

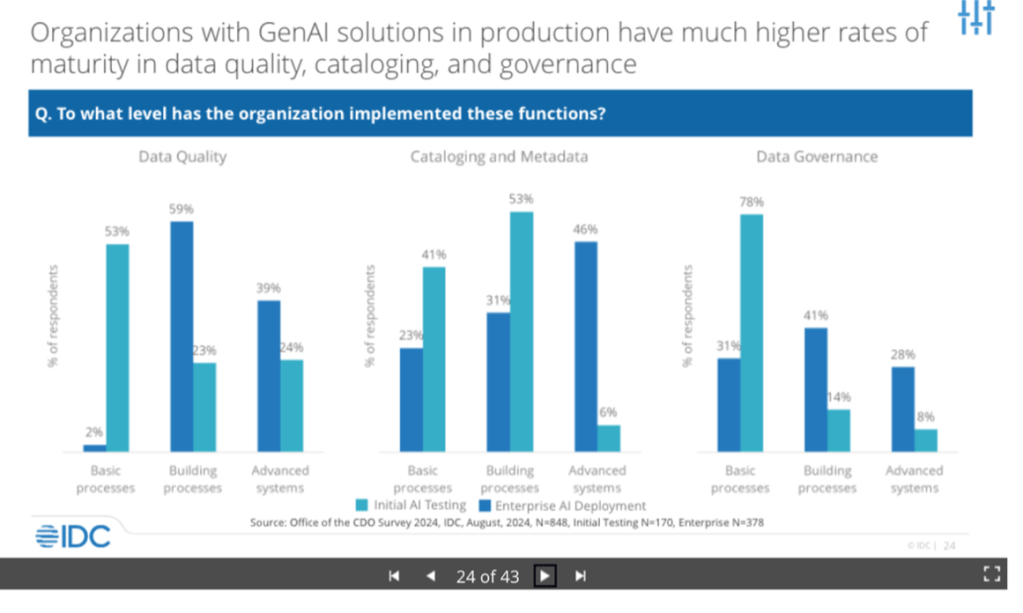

Organizations that have taken steps to better organize their data are more likely to possess data maturity, a key attribute of companies that succeed with AI. Research firm IDC defines data maturity as the use of advanced data quality, cataloging and metadata, and data governance processes. The research firm’s Office of the CDO Survey finds firms with data maturity are far more likely than other organizations to have generative AI solutions in production.

IDC

“Organizations are prioritizing data quality to boost the productivity of data workers and to enhance the accuracy and relevance of AI-generated outcomes,” says Stewart Bond, vice president of IDC’s data intelligence and integration software service.

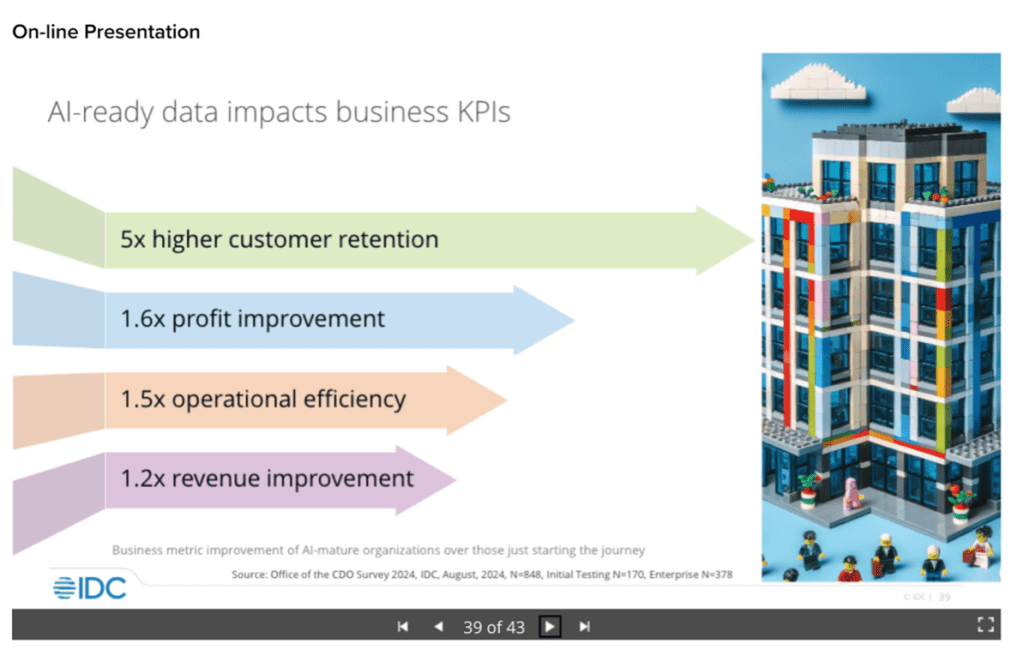

Further, the same IDC research reveals that preparing data to yield optimal AI results has a strong bottom-line effect on the business, delivering a fivefold improvement in customer retention as well as strong gains in profit, efficiency, and revenue.

IDC

For Skyworks Solutions’ Jayadev, a data lakehouse built with Databricks technology is the focus of data quality work.

“The data lakehouse is, in a sense, the foundation of a skyscraper. We collect every piece of data, then sort and group it to build bronze, silver, and gold layers of data quality,” the VP and CIO explains. “We have petabytes of data sitting in the data lakehouse, with terabytes coming in from our factory and other sources.”
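The bronze, silver, and gold layering Jayadev describes can be illustrated with a minimal sketch. This is not Skyworks' or Databricks' implementation; the record fields, the sample values, and the function names (`to_bronze`, `to_silver`, `to_gold`) are assumptions made up for illustration. The idea is only that raw data is kept as received, then validated, then aggregated for consumption.

```python
from collections import defaultdict

# Hypothetical raw factory readings; field names and values are illustrative only.
RAW_EVENTS = [
    {"site": "fab-1", "sensor": "temp", "value": "71.5"},
    {"site": "fab-1", "sensor": "temp", "value": "bad"},   # unparseable reading
    {"site": "fab-1", "sensor": "temp", "value": "72.5"},
    {"site": "fab-2", "sensor": "temp", "value": "68.0"},
]


def to_bronze(events):
    """Bronze: keep a copy of every record exactly as it arrived."""
    return [dict(event) for event in events]


def to_silver(bronze):
    """Silver: cast types and drop records that fail basic validation."""
    silver = []
    for row in bronze:
        try:
            value = float(row["value"])
        except (KeyError, ValueError):
            continue  # the rejected record still exists in bronze for auditing
        silver.append({"site": row["site"], "sensor": row["sensor"], "value": value})
    return silver


def to_gold(silver):
    """Gold: aggregate clean records into per-site, per-sensor averages."""
    grouped = defaultdict(list)
    for row in silver:
        grouped[(row["site"], row["sensor"])].append(row["value"])
    return {key: sum(values) / len(values) for key, values in grouped.items()}


bronze = to_bronze(RAW_EVENTS)
silver = to_silver(bronze)
gold = to_gold(silver)
```

Keeping the unfiltered bronze copy means a rejected record can be traced and reprocessed later if the validation rules change.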

Gallo harvests vintage data

Jayadev and Skyworks Solutions are not alone. Gallo, the giant producer of wine and other beverages, has implemented a data warehouse and a data lakehouse from which to glean AI insights, according to CIO Robert Barrios. The company has built an SAP S/4HANA data warehouse that is broken into separate data marts for consumer, finance, and sourcing data. In addition, Gallo has implemented an Amazon Redshift data lakehouse for non-SAP data, applying metadata to impart structure.

Gallo is also using generative AI to improve the quality of data by identifying deviances from standard strings and by filling in data gaps, Barrios says. For example, when an attribute of a customer data entry is outside the norm, gen AI can discern the correct attribute and substitute it for the erroneous attribute. The same applies to wine characteristics. For example, a wine might be described as “spicy,” while the accepted term is “peppery.” Because it understands context, generative AI changes the wrong term to the right one.
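To make the "spicy" versus "peppery" example concrete, here is a minimal sketch of normalizing a descriptor against a controlled vocabulary. It is a deliberately simple rule-based stand-in, not generative AI and not Gallo's method: the vocabulary, the synonym table, and the `normalize_term` helper are all hypothetical, and a gen AI model would replace the lookup with context-aware judgment.

```python
import difflib

# Hypothetical controlled vocabulary and synonym table, for illustration only.
ACCEPTED_TERMS = ["peppery", "fruity", "oaky", "floral"]
SYNONYMS = {"spicy": "peppery"}


def normalize_term(term):
    """Map a free-text descriptor to an accepted term, or None if no match."""
    cleaned = term.strip().lower()
    if cleaned in ACCEPTED_TERMS:
        return cleaned
    if cleaned in SYNONYMS:
        return SYNONYMS[cleaned]
    # Fall back to fuzzy matching to catch small misspellings.
    close = difflib.get_close_matches(cleaned, ACCEPTED_TERMS, n=1, cutoff=0.8)
    return close[0] if close else None
```

Returning `None` for unrecognized terms, rather than guessing, leaves room for a human or a model to review the entries a fixed table cannot resolve.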

For gen AI, Gallo is using Amazon Bedrock. With Bedrock, Gallo works with its own LLMs rather than public LLMs so that its data is not exposed publicly.

Gallo’s next step is to document how the company makes decisions and then feed that information to AI agents that can make decisions on their own, an implementation of agentic AI. “It’s no different from a sports or real estate agent. You tell the agent what you want and the agent finds it for you,” says Barrios.

Pharmaceutical data finds home in lakehouse

Servier Pharmaceuticals centralized its data in a Google Cloud Platform (GCP) BigQuery data lakehouse, which provides a common data platform for six corporate IT portfolios serving groups ranging from R&D to product teams to corporate PR, each of which implements AI to some degree. The lakehouse and its metadata tags deliver the added benefit of breaking down data silos that would otherwise separate the data used by the different teams, according to Mark Yunger, head of IT at Servier Pharmaceuticals, a maker of treatments for cancer and other hard-to-treat diseases.

“We created a rational taxonomy and data nomenclature around all that disparate data so we can use it for AI algorithms, making sure we have good data going in. That helps assure our output is correct,” Yunger says, adding that the AI analytics are particularly beneficial for sales and marketing analysis and insights.

In the pharmaceutical industry, patents are extremely important. That means Servier must diligently protect its own patents while guarding against infringing on other companies’.

“We have to be mindful of what we put into public data sets,” says Yunger. With that caution in mind, Servier has built a private version of ChatGPT on Microsoft Azure to ensure that teams benefit from access to AI tools while protecting proprietary information and maintaining confidentiality. The gen AI implementation is used to speed the creation of internal documents and emails, Yunger says.

In addition, personal data that might crop up in pharmaceutical trials must be treated with the utmost caution to comply with the European Union’s AI Act, which prohibits organizations from actively monitoring an individual without that person’s consent.

The stakes are high. “A lot could go horribly wrong. If you have compliance issues, that can turn into material fines. You have to make sure you are playing by the rules,” says Yunger.

AES draws energy data from the source

AES, a power generation company focusing on sustainable energy, has built CEDAR, a data platform for AI in GCP that aggregates and governs operational data from its clean energy sites, according to Alejandro Reyes, chief digital officer at AES.

“CEDAR creates harmony as to how the data is collected and defined. It makes it the same across our entire product line,” says Reyes. Using both Atlan, a data cataloging tool, and Qualytics, an ML-based data quality tool, CEDAR applies standards to the data so that it can serve as a single source for AI, whether used by finance, engineering, maintenance, or another corporate unit, Reyes explains.
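What it means to collect and define data the same way across sources can be sketched as a mapping onto one canonical schema. This is an assumption-laden illustration, not how CEDAR, Atlan, or Qualytics work: the source names, field names, unit conversion, and the `harmonize` function are all hypothetical.

```python
# Hypothetical per-source mappings from local field names to canonical ones.
FIELD_MAPS = {
    "solar_site": {"ts": "timestamp", "kwh": "energy_kwh"},
    "wind_site": {"time": "timestamp", "energy_mwh": "energy_kwh"},
}

# Hypothetical unit conversions: this source reports MWh, the canonical unit is kWh.
UNIT_SCALE = {("wind_site", "energy_mwh"): 1000.0}


def harmonize(source, record):
    """Rename fields and convert units so every source yields the same shape."""
    mapping = FIELD_MAPS[source]
    out = {"source": source}
    for raw_name, value in record.items():
        if raw_name not in mapping:
            continue  # fields outside the canonical schema are dropped
        key = (source, raw_name)
        if key in UNIT_SCALE:
            value = value * UNIT_SCALE[key]
        out[mapping[raw_name]] = value
    return out


solar = harmonize("solar_site", {"ts": "2024-01-01T00:00", "kwh": 250.0})
wind = harmonize("wind_site", {"time": "2024-01-01T00:00", "energy_mwh": 1.5, "extra": 1})
```

Once both records share the same field names and units, a downstream consumer in finance or engineering can read either one without knowing which site produced it.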

AES’s Farseer, which earned the company a 2024 CIO 100 Award, is an AI-based platform that leverages CEDAR data to give AES an understanding of market demand, anticipated weather conditions, energy capacity, and expected revenue. That information enables AES to determine how much energy to put on the market and how to price it, according to Reyes. In addition, AES is using both Google Gemini and Microsoft Copilot and is exploring agentic AI to handle back-office processes.

Everything rests on the data foundation

While data warehouses, lakes, and lakehouses are far from new, the push to gain business value from AI is shining a bright spotlight on them — one that demands top-notch data governance.

“AI is not traditional IT but a transformational tool — everyone wants access to it. The challenge was to put governance in place so we can open up the data and AI platform for the business to build all its use cases,” says Skyworks Solutions’ Jayadev.

According to Servier’s Yunger, wishing it were true won’t make it so: skilled IT professionals are needed. In the 18 months since he began his data governance project, Yunger says, bridging the talent gap has been the biggest obstacle he has faced. “It’s a combination of talent (capacity and skillset) and process. You need to find the right talent to help drive and accelerate these steps.”

To achieve what he calls “sustainable AI,” AES’s Reyes counsels striking a delicate balance: implementing data governance, but in a way that does not disrupt work patterns. He advises making sure everyone at the company understands that data must be treated as a valuable asset. With the high stakes of AI in play, there is strong reason to catalog and manage it accurately.

Barrios of Gallo reinforces the idea of a single, strong data foundation. “If you have a bunch of different foundations, it could become a house of cards.” But a foundation alone is not enough. Getting the business side of the house on board is vital, asserts Barrios.

“Partner with the business to make sure they have metrics that show how you are doing,” he advises. “You could have the greatest data lakehouse, but people have to use it.”
