AI tools designed to assist developers are no longer staying in the background. They are starting to shape what actually gets built and deployed.

They open pull requests.

They modify dependencies.

They generate infrastructure templates.

They interact directly with repositories and CI/CD pipelines.

At some point, this stops being assistance.

It becomes participation.

And participation changes the problem.

When assistance becomes participation

The shift from generative to agentic behavior is the inflection point.

Earlier tools operated inside a tight loop. A developer prompted. The system suggested. The developer reviewed. Nothing moved without human intent.

That boundary is eroding.

Newer systems propose changes, update libraries, remediate vulnerabilities and interact with development pipelines with limited human intervention. They don’t just accelerate developers. They begin to shape the artifacts that move through the software supply chain — code, dependencies, configurations and infrastructure definitions.

That makes them something different.

Not tools.

Participants.

And once something participates in the supply chain, it inherits the same question every other participant does:

How is it governed?

A simple scenario

Consider a common pattern already emerging in many environments.

An AI system identifies a vulnerable dependency.

It opens a pull request updating the library.

A workflow triggers automated tests.

The change is promoted into a staging environment.

Four steps.

No human review.

No explicit governance checkpoint.

Each step is individually valid. Nothing looks wrong in isolation.

But taken together, they create something fundamentally different: A system that can change enterprise software without human intent being re-established at any point.

Research from Black Duck found that while 95% of organizations now use AI in their development process, only 24% properly evaluate AI-generated code for security and quality risks.

This is autonomous change propagation across the software supply chain.
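
To make the missing checkpoint concrete, here is a minimal sketch of a gate that runs before promotion and asks for human approval on machine-authored changes. Everything in it is an assumption for illustration: the `Change` record, its fields, the "human"/"ai" labelling and the `may_promote` function are hypothetical and do not come from any particular CI/CD product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Change:
    """A proposed change moving through a pipeline (hypothetical record)."""
    change_id: str
    author_kind: str                  # "human" or "ai" (assumed labelling)
    tests_passed: bool
    human_approvers: list[str] = field(default_factory=list)


def may_promote(change: Change) -> tuple[bool, str]:
    """Decide whether a change may be promoted to staging.

    Passing tests is necessary but not sufficient: an AI-authored change
    also needs at least one named human approver.
    """
    if not change.tests_passed:
        return False, "tests failed"
    if change.author_kind == "ai" and not change.human_approvers:
        return False, "AI-authored change has no human approval"
    return True, "ok"


# The four-step scenario: tests pass, but nobody reviewed the change.
dependency_bump = Change("chg-1", author_kind="ai", tests_passed=True)
print(may_promote(dependency_bump))

# The same change after a reviewer signs off.
dependency_bump.human_approvers.append("reviewer@example.com")
print(may_promote(dependency_bump))
```

In the scenario above, the first call is refused and the second is allowed. The point of the sketch is only that green tests and human intent are separate conditions.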

The “human-in-the-loop” fallacy

Many organizations rely on a “human-in-the-loop” (HITL) requirement as a safety mechanism for AI-generated code.

At low volumes, this works.

At scale, it breaks.

When an AI system generates dozens of pull requests in a short window, review becomes a throughput problem, not a control. The cognitive load of validating machine-generated logic exceeds what a human can realistically govern.

What remains is not oversight, but a checkpoint.

And checkpoints without effective review are not controls.
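
A back-of-the-envelope calculation shows how quickly the review budget shrinks. The team size and hours below are assumed figures chosen for illustration, not measurements, and `review_minutes_per_pr` is a hypothetical helper.

```python
def review_minutes_per_pr(prs_per_day: int, reviewers: int,
                          review_hours_per_reviewer: float) -> float:
    """Average minutes of human attention available per pull request."""
    if prs_per_day <= 0:
        raise ValueError("prs_per_day must be positive")
    total_minutes = reviewers * review_hours_per_reviewer * 60
    return total_minutes / prs_per_day


# Assumed team: 4 reviewers, each able to spend 2 hours a day on review.
for volume in (10, 50, 200):
    minutes = review_minutes_per_pr(volume, reviewers=4,
                                    review_hours_per_reviewer=2.0)
    print(f"{volume:>3} PRs/day -> {minutes:.1f} minutes per PR")
```

Under these assumptions, available attention falls from 48 minutes per pull request at 10 a day to 2.4 minutes at 200 a day. The approval step still exists, but the time behind it does not.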

The governance gap

Most governance models assume a stable truth: Humans are the primary actors.

Controls tie identity to individuals, approvals to intent and audit trails to accountability.

Even automation systems are treated as extensions of human intent — predictable, bounded and deterministic.

AI systems break that model.

They can generate new logic, act on it and propagate changes across systems. Yet in most environments, they are still governed as if they were static tools.

That mismatch is the gap.

Machine identity is no longer what it was

One way to see this clearly is through identity.

Every interaction an AI system has — repository access, pipeline execution, API calls — requires credentials. In practice, these systems operate as machine identities.

But they are not traditional machine identities.

A service account executes predefined logic. Its behavior is known in advance. Its risk is bounded by what it was configured to do.

An AI-driven system is different. It generates the logic it then executes.

It can propose new code paths, interact with new systems and trigger actions that were not explicitly predefined at the time access was granted.

That is a category change.

Not just a new identity type, but a new attack surface: Identities that can generate the behavior they are authorized to execute.
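
One way to picture the difference: a service account's actions can be listed when access is granted, while an agent's actions only exist once it generates them, so each one has to be checked against declared bounds at the moment it is proposed. The sketch below assumes a simple verb-and-target model. `Action`, `Envelope` and the example verbs are hypothetical names, not an existing identity product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """An action an identity wants to perform (hypothetical shape)."""
    verb: str      # e.g. "open_pr", "merge", "deploy"
    target: str    # e.g. "repo:payments", "env:staging"


@dataclass(frozen=True)
class Envelope:
    """Bounds declared when access is granted."""
    allowed_verbs: frozenset
    allowed_target_prefixes: tuple

    def permits(self, action: Action) -> bool:
        """Check a runtime-generated action against the declared bounds."""
        return (action.verb in self.allowed_verbs
                and action.target.startswith(self.allowed_target_prefixes))


agent_envelope = Envelope(
    allowed_verbs=frozenset({"open_pr", "comment"}),
    allowed_target_prefixes=("repo:",),
)

# Actions the agent generated at runtime; none were listed in advance.
proposed = [
    Action("open_pr", "repo:payments"),
    Action("merge", "repo:payments"),
    Action("deploy", "env:staging"),
]

for action in proposed:
    verdict = "allow" if agent_envelope.permits(action) else "deny"
    print(f"{verdict}: {action.verb} -> {action.target}")
```

Here only the pull request is allowed; the merge and the deployment fall outside the envelope. A real system would need far richer context than a verb and a prefix, but the check happens at action time rather than at grant time, which is the part that matters.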

The World Economic Forum has identified this class of non-human identity as one of the fastest-growing and least-governed security risks in enterprise AI adoption.

Measuring exposure before solving it

Most organizations already track access-related metrics. Those metrics were designed for human-driven systems.

They are no longer sufficient.

If AI systems are participating in the software supply chain, organizations need to measure where and how that participation introduces risk.

A few signals matter immediately:

- AI-generated artifact footprint: What portion of code, dependencies or infrastructure definitions in production originates from AI-assisted processes?

- Authority scope of AI systems: What systems can these identities access — and what actions can they take across repositories and pipelines?

- Autonomous change rate: How often are changes introduced and propagated without explicit human review?

- Cross-system interaction surface: How many systems does a single AI workflow touch as part of normal operation?

- Auditability of AI-driven actions: Can changes be traced cleanly to a system, workflow and triggering context?

These are not abstract concerns. They are measurable.

And until they are measured, they are not governed.
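
As a rough sketch of what measuring could look like, the snippet below computes four of these signals from a batch of change records. The `ChangeRecord` schema, the "ai"/"human" labels and the metric definitions are all assumptions made for illustration. Authority scope is left out because it would come from access configuration rather than change history.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeRecord:
    """One change that reached production (hypothetical log schema)."""
    origin: str                 # "ai" or "human" (assumed labelling)
    human_reviewed: bool
    systems_touched: frozenset  # e.g. {"repo", "ci", "staging"}
    trace_id: Optional[str]     # None when the change cannot be traced


def exposure_metrics(records: list) -> dict:
    """Summarise AI participation across a batch of change records."""
    ai = [r for r in records if r.origin == "ai"]
    if not ai:
        # No AI-originated changes: nothing to expose, nothing untraced.
        return {"ai_footprint": 0.0, "autonomous_change_rate": 0.0,
                "max_interaction_surface": 0, "auditability": 1.0}
    unreviewed = [r for r in ai if not r.human_reviewed]
    traced = [r for r in ai if r.trace_id]
    return {
        # Share of all changes that originated from an AI process.
        "ai_footprint": len(ai) / len(records),
        # Share of all changes that were AI-originated and never reviewed.
        "autonomous_change_rate": len(unreviewed) / len(records),
        # Largest number of systems touched by a single AI change.
        "max_interaction_surface": max(len(r.systems_touched) for r in ai),
        # Share of AI changes that carry a trace identifier.
        "auditability": len(traced) / len(ai),
    }


sample = [
    ChangeRecord("human", True, frozenset({"repo"}), "t-1"),
    ChangeRecord("ai", True, frozenset({"repo", "ci"}), "t-2"),
    ChangeRecord("ai", False, frozenset({"repo", "ci", "staging"}), None),
    ChangeRecord("ai", False, frozenset({"repo", "ci"}), "t-4"),
]
print(exposure_metrics(sample))
```

For this made-up sample, three of four changes are AI-originated, half of all changes went through without review, one workflow touched three systems and a third of the AI changes cannot be traced. The definitions are debatable. What matters is having numbers to argue about.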

The regulatory imperative

This is not just a technical shift. It is a governance and liability shift.

As regulatory expectations evolve — from AI accountability frameworks to cybersecurity disclosure requirements — organizations are increasingly responsible for explaining and controlling automated decisions inside their environments.

If an AI-driven change introduces a vulnerability or leads to a material incident, “the system generated it” will not be an acceptable answer.

Accountability will still sit with the enterprise.

That raises the bar: Governance must extend to how autonomous systems act, not just how they are accessed.

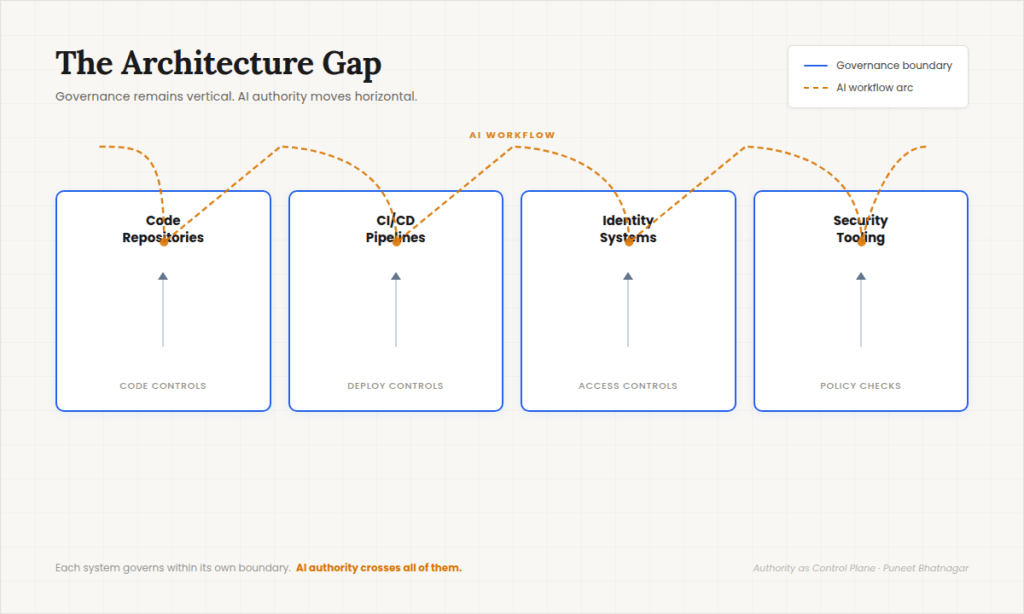

The architecture gap

Puneet Bhatnagar

The issue is not that any one control is missing.

It is that AI systems operate across the seams of systems designed to govern within their own boundaries.

Repositories enforce code controls.

Pipelines enforce deployment controls.

Identity systems enforce access controls.

Security tools enforce policy checks.

Each works as designed.

But AI systems move across all of them.

They read from one system, generate changes, trigger another and influence a third. Authority is exercised across systems, while governance remains within them.

That is the architectural gap.

A different governance model

Most organizations will respond to this shift by trying to extend existing access controls. That instinct is understandable — and insufficient.

The problem is no longer just who or what can access a system. It is how control is maintained when authority can generate new actions dynamically.

This requires a different model of governance.

One that treats software systems as actors whose behavior must be bounded, observed and continuously evaluated across workflows — not just permitted or denied at a point of access. Governance becomes less about static permissions and more about controlling the shape and impact of actions across systems.
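
What "bounded, observed and continuously evaluated" might mean in practice can be sketched with a guard that follows one workflow across systems and re-evaluates the whole run on every action, rather than checking each system's permissions in isolation. `WorkflowGuard`, its limits and the system names are hypothetical, and real bounds would likely weigh impact, not just counts.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowGuard:
    """Tracks what one AI workflow has done across systems (hypothetical)."""
    workflow_id: str
    max_systems: int    # bound on cross-system reach
    max_changes: int    # bound on total permitted actions per run
    log: list = field(default_factory=list)

    def record(self, system: str, action: str) -> bool:
        """Observe an action, then evaluate the workflow as a whole.

        Returns True if the workflow stays within its bounds. Denied
        actions are logged too, so the audit trail stays complete.
        """
        systems = {s for s, _, ok in self.log if ok} | {system}
        changes = sum(1 for _, _, ok in self.log if ok) + 1
        within = (len(systems) <= self.max_systems
                  and changes <= self.max_changes)
        self.log.append((system, action, within))
        return within


guard = WorkflowGuard("wf-42", max_systems=2, max_changes=3)
print(guard.record("repo", "open_pr"))       # True
print(guard.record("ci", "trigger_tests"))   # True
print(guard.record("staging", "deploy"))     # False: a third system
```

Each of the three actions might be permitted by the system that receives it. The guard refuses the third because of what the workflow has already done elsewhere, which is the kind of cross-system view that point-of-access controls lack.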

That is the shift.

Conclusion

The conversation around AI in software development often focuses on productivity.

But as AI systems begin to participate in producing and modifying enterprise software, the more important question becomes governance.

AI is not just accelerating the software development lifecycle. It is becoming part of the software supply chain itself.

And that changes the problem.

The challenge for CIOs is no longer just managing developers, tools or pipelines. It is understanding and governing the authority that software systems exercise across them.

Because in a world where software can act on behalf of the enterprise, governance is no longer just about access.

It is about authority — what systems are allowed to do, and how that authority is controlled and measured over time.

This article is published as part of the Foundry Expert Contributor Network.

