The next wave of AI in software development goes beyond better code generation: agents are starting to take accountability throughout planning, design, build, test, release and operations. In the teams I work with, this is already changing team dynamics, leadership priorities and what CIOs must do to maintain quality, security and control.

The biggest shift I see is genuine delegation: AI can now draft backlog items, inspect codebases, propose implementation paths, create tests, summarize reviews and prep releases before teams fully agree on ‘done.’ This marks a shift from AI as an assistant to AI as an active participant. That is why this topic matters for CIOs right now. With Google I/O on May 19–20 and Microsoft Build on June 2–3, attention will continue to rise around AI coding models, agentic development workflows and the platforms that now span planning through operations. Microsoft and GitHub are embedding agents more deeply into the engineering workflow.

Gemini Code Assist, GitHub Copilot’s coding agent, OpenAI Codex and Claude Code all reflect the same direction: AI is beginning to participate across planning, building, testing, reviewing and operations, not just within the editor. Google is extending coding assistance into broader lifecycle support. Amazon is leaning into operationalization. OpenAI and Anthropic are pushing agentic coding and repository reasoning. Newer prompt-to-app platforms such as Lovable and Replit are compressing the path from idea to working application. The market signal is clear: AI is moving beyond code suggestion and into software delivery itself.

For business and technology executives, the strategic question is no longer whether AI can generate output. It is whether the organization can use AI to improve delivery without creating faster paths to weak requirements, inconsistent standards, poor testing and vague governance. That is why I frame this conversation around software delivery rather than relying too heavily on the older SDLC label. SDLC still makes sense, but it sounds procedural for what is actually happening. Agentic AI is not just accelerating tasks inside a fixed lifecycle. It is rewiring the operating model of delivery. Recent DORA research reinforces what I see in practice: AI tends to amplify an organization’s existing strengths and weaknesses and the biggest returns come not from the tool alone, but from improving the delivery system around it.

Where agentic AI is creating the most value

CIOs should focus first on where agentic AI is creating measurable value across the lifecycle. In planning and requirements, AI can already do meaningful first-pass work. Teams can ask it to inspect an existing codebase, summarize dependencies, suggest implementation paths, draft user stories, refine acceptance criteria and surface tradeoffs before engineers begin building. Used well, that reduces administrative drag and improves consistency. It also changes where the bottleneck appears. What I see most often is that teams adopt agentic tools expecting a productivity boost, but the first real bottleneck appears upstream, when acceptance criteria are too loose for the agent to interpret safely. The teams that struggle most are not the ones with weak prompts. They are the ones with vague intent. AI amplifies ambiguity as efficiently as it amplifies insight. OpenAI’s guidance for AI-native engineering teams describes agents contributing to scoping, ticket creation and other lifecycle work well before code is merged.

Vipin Jain

In architecture and design, the real gain is not that AI can produce more diagrams. It can help teams compare options faster, trace dependencies, expose inconsistencies and document decisions with less manual effort. But architecture is not just pattern matching. It is a judgment about resilience, security, compliance, integration, cost and long-term business fit. The strongest teams use AI to explore options while architects define the guardrails, review points and non-functional requirements that the system must adhere to. In an agentic environment, architecture becomes more important, not less, because someone still has to define what the system is allowed to do. What I see in the strongest teams also matches Anthropic’s experience: simpler, well-bounded agent patterns usually outperform elaborate multi-agent complexity when the goal is reliable software delivery.

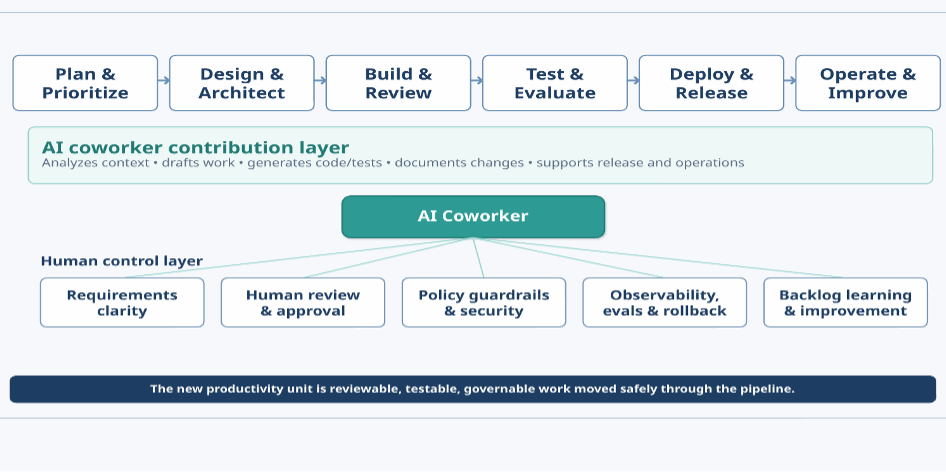

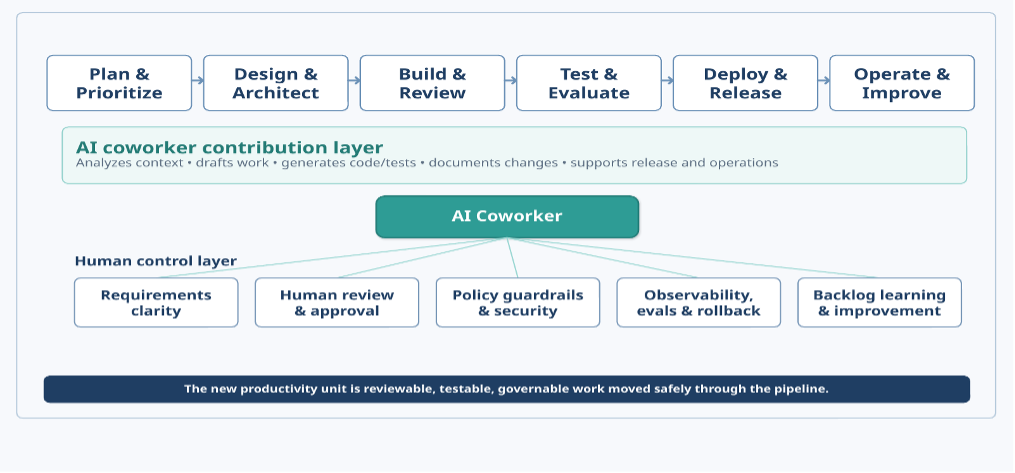

Build, test and review are changing even faster. GitHub Copilot’s coding agent, Claude Code, Amazon Q Developer, OpenAI Codex and Google’s broader agentic tooling all point in the same direction: the market is moving from AI-assisted coding to AI-assisted flow. In practice, that means agents can decompose work, generate code, create tests, run checks, summarize failures and prepare work for human review. The important metric is no longer lines of code per developer. It is the amount of safe, reviewable work the team can move through the pipeline without increasing rework. That is a more executive-relevant measure because it ties AI to throughput and quality rather than just speed. Benchmarks such as SWE-bench matter here because they test models against real repository-level software tasks, rather than isolated code snippets, which is much closer to the work CIOs are actually trying to improve.

Deployment, operations and maintenance are where the stakes for the enterprise are highest. This is the point that many organizations underestimate. Writing code is visible. Governing agent behavior in production is harder, less glamorous and much more important. In the teams I see gaining the most value, leaders are using AI to support release readiness, detect anomalies, summarize incidents, draft remediation steps and improve documentation around recurring issues. I have also seen teams pilot agents successfully in build, then stall at release because no one had clearly defined what the agent could change on its own, what required approval or who owned rollback when something went wrong. The organizations that make progress are the ones that answer those questions early. That is where trust is built. That is also why the market is shifting toward governed runtime and operations support, not just coding help; Amazon Bedrock AgentCore is one example of that broader move toward secure deployment, monitoring and controlled agent operation at scale.

How roles and teams are evolving

Agentic AI changes agile teams by shifting what roles contribute. Developers spend less time on first drafts and more time steering AI, validating diffs, hardening edge cases and managing exceptions. Their leverage shifts from typing speed to judgment—knowing what to trust, challenge or escalate. Leaders should recognize this meaningful change in role identity.

Architects also move up the value chain. In traditional environments, they often spend too much time creating static documentation that teams interpret unevenly. In agentic environments, the more valuable work is defining executable guardrails: approved patterns, tool boundaries, policy controls, integration rules and quality gates that both humans and agents can follow. That makes architecture more operational and more consequential.

QA, platform and SRE teams also gain influence. Testing becomes less about writing every case manually and more about building evaluation strategies, validating behavior, instrumenting pipelines and preserving rollback discipline. The closer AI moves to release and operations, the more essential traceability, observability and control become.

Product owners and business analysts also need to raise their game. When requirements are fuzzy, human teams usually compensate through conversation. Agents often execute fuzziness literally. In practice, that means the teams that benefit most from agentic AI are the ones that improve intent, edge-case thinking and acceptance discipline.

One more shift deserves attention: pro-code and low-code are converging. Microsoft’s Copilot Studio, IBM watsonx Orchestrate, Lovable and Replit are lowering the barrier between idea and execution for a broader set of contributors. That is good news for experimentation and business alignment, but it also raises the risk of software sprawl outside shared architecture and security controls. CIOs should not dismiss these tools as toys, nor let them float free of governance. The most effective organizations will connect pro-code and low-code through common guardrails rather than force a false choice between them.


What CIOs should do now

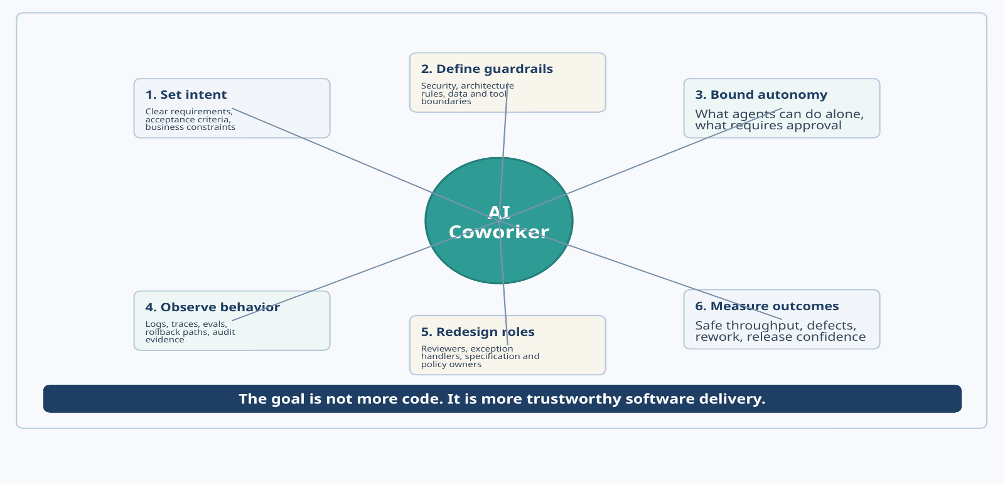

As roles and delivery processes evolve, what concrete actions should CIOs consider now? The organizations I see getting the most from agentic AI are not treating it as a coding-assistant bakeoff. They are redesigning the delivery system around it. That starts with intent. Leaders should raise the quality of requirements before work enters agentic pipelines. If the business outcome, constraints and acceptance criteria are unclear, the AI will often produce technically plausible but strategically wrong work.

Next come guardrails and autonomy. Leaders should define what agents can do on their own, what requires approval, what systems and data they can touch and what evidence the pipeline must capture. This is not bureaucracy for its own sake. It is the difference between acceleration and avoidable damage. Teams need clear security rules, architecture patterns, approval boundaries and rollback paths before they scale autonomy. Google Research offers a useful counterweight to the hype here: more agents do not automatically produce better outcomes, especially when the task design, coordination model and workflow are weak.
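To make the idea of an autonomy boundary concrete, here is a minimal Python sketch of a default-deny policy check. It is an illustration under stated assumptions, not a reference to any vendor's API: the action names, target names and the `evaluate` function are all hypothetical, and a real organization would define its own lists and wire the check into its pipeline.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"              # the agent may act on its own
    NEEDS_APPROVAL = "approval"  # a named human must sign off first
    DENY = "deny"                # outside the agent's boundary


@dataclass(frozen=True)
class AgentAction:
    kind: str    # hypothetical action name, e.g. "open_pull_request"
    target: str  # hypothetical system or data scope, e.g. "repo:billing"


# Hypothetical policy tables, invented for this illustration.
AUTONOMOUS_ACTIONS = {"draft_tests", "open_pull_request", "update_docs"}
APPROVAL_ACTIONS = {"merge_to_main", "deploy_to_production", "change_schema"}
ALLOWED_TARGETS = {"repo:billing", "repo:docs"}


def evaluate(action: AgentAction) -> Decision:
    """Default-deny: anything not explicitly listed is refused."""
    if action.target not in ALLOWED_TARGETS:
        return Decision.DENY
    if action.kind in AUTONOMOUS_ACTIONS:
        return Decision.ALLOW
    if action.kind in APPROVAL_ACTIONS:
        return Decision.NEEDS_APPROVAL
    return Decision.DENY
```

The design choice worth noting is the default: an action the policy has never heard of is denied rather than allowed, so new agent capabilities have to be granted deliberately.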


Then comes observability. If an agent drafts code, generates tests, touches data, triggers a workflow or influences a release decision, leaders should be able to see that activity, evaluate it and audit it later. This is where many pilots remain weak. They prove that AI can do something. They do not prove that the organization can repeatedly trust it. That is why more formal evaluation matters. Microsoft’s guidance on agent evaluators is useful here because it focuses on operational signals leaders actually need: task completion, task adherence, intent resolution and tool-call accuracy.
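As a rough illustration of what "see it, evaluate it and audit it later" can mean in practice, the Python sketch below records each agent action as a structured event and answers two simple audit questions. Everything here is an assumption made for the example: the `AgentEvent` fields, the `AuditLog` class and the in-memory storage are hypothetical, and a production system would write to durable, tamper-evident storage instead.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AgentEvent:
    agent: str                         # which agent acted
    action: str                        # what it did
    target: str                        # which system or data it touched
    approved_by: Optional[str] = None  # accountable human, if any
    timestamp: str = field(default_factory=_utc_now)


class AuditLog:
    """In-memory, append-only log of JSON lines (illustrative only)."""

    def __init__(self) -> None:
        self._lines: list = []

    def record(self, event: AgentEvent) -> None:
        self._lines.append(json.dumps(asdict(event), sort_keys=True))

    def events_for(self, target: str) -> list:
        """Everything any agent did to one system or data scope."""
        return [e for e in map(json.loads, self._lines) if e["target"] == target]

    def unapproved(self) -> list:
        """Actions that ran without a named human approver."""
        return [e for e in map(json.loads, self._lines) if e["approved_by"] is None]
```

A log like this does not evaluate quality by itself, but it supplies the raw evidence that evaluation and later audits depend on.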

Finally, leaders should change how they measure success. Code volume and demo velocity are weak proxies. Better measures include defect escape, rework, release confidence, cycle time for work that reaches production safely and the percentage of work that moves through the pipeline with clear evidence and human accountability. Start with bounded use cases such as maintenance tasks, test generation, documentation, technical debt reduction and lower-risk feature work with strong review. Build supervision muscle before you try to scale autonomy.
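The measures above can be computed from very little data. The Python sketch below is one possible way to do it, with loudly stated assumptions: the `WorkItem` fields and the rate definitions (each rate is a share of items that reached production) are choices made for this illustration, not a standard or a DORA definition.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class WorkItem:
    shipped: bool         # reached production
    reworked: bool        # needed rework after review or release
    escaped_defect: bool  # a defect was found after release
    has_evidence: bool    # pipeline evidence plus a named accountable human


def delivery_measures(items: Iterable[WorkItem]) -> Dict[str, float]:
    """Rates over shipped items only; all zeros if nothing has shipped."""
    shipped = [i for i in items if i.shipped]
    n = len(shipped)
    if n == 0:
        return {"defect_escape_rate": 0.0, "rework_rate": 0.0, "evidence_coverage": 0.0}
    return {
        "defect_escape_rate": sum(i.escaped_defect for i in shipped) / n,
        "rework_rate": sum(i.reworked for i in shipped) / n,
        "evidence_coverage": sum(i.has_evidence for i in shipped) / n,
    }
```

Tracking these for a bounded use case before and after introducing an agent gives leaders a comparison that code volume cannot.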

The executive takeaway

The strategic mistake I see most often is treating this moment as a tool refresh or a beauty contest among AI coding platforms. Google, Microsoft, Amazon, OpenAI, Anthropic and the next wave of prompt-to-app players matter because they signal where the market is going. But the winning question for leaders is not which demo looks smartest. It is whether the organization is redesigning software delivery so AI can contribute without weakening quality, security or control.

More generated code is not the prize. Better software delivery is. The enterprises that win will connect business intent to engineering execution more tightly, instrument agent behavior more rigorously and redesign team roles around judgment, supervision and accountability. They will make AI part of the team, not just another tab in the IDE.

This article was made possible by our partnership with the IASA Chief Architect Forum. The CAF’s purpose is to test, challenge and support the art and science of Business Technology Architecture and its evolution over time as well as grow the influence and leadership of chief architects both inside and outside the profession. The CAF is a leadership community of the IASA, the leading non-profit professional association for business technology architects.

This article is published as part of the Foundry Expert Contributor Network.

