Boris Cherny, creator of Anthropic’s Claude Code, says he hasn’t written a line of code by hand in months. He shipped 22 pull requests one day, 27 the next, all AI-generated. Company-wide, Anthropic reports that 70 to 90% of its code is now written by AI. CEO Dario Amodei has predicted that AI could handle “most, maybe all” of what software engineers do within months.

And yet Anthropic typically has dozens of software engineering openings, one reportedly carrying $570K in total compensation. As one observer noted, the company is simultaneously predicting the end of the profession and paying top dollar to hire into it.

Meanwhile, during his GTC 2026 keynote, NVIDIA CEO Jensen Huang said that 100% of NVIDIA now uses AI coding tools, including Claude Code, Codex and Cursor, often all three. Then, in a conversation on the All-In Podcast during GTC week, Huang sharpened the point: A $500,000 engineer who doesn’t consume at least $250,000 in AI tokens annually is like “one of our chip designers who says, guess what, I’m just going to use paper and pencil.”

This isn’t cognitive dissonance. It’s a signal. And CIOs who look past the headlines will find a pattern that explains not just where AI coding is going, but where all of enterprise AI is headed.

Tellers, not toll booth workers

The instinct is to see this as an extinction event. AI writes all the code; engineers become toll booth workers, replaced entirely by automation with no complementary role left behind. But the data tells a different story, one I explored in a recent CIO.com article on AGI skepticism.

When ATMs rolled out, bank teller employment didn’t collapse. It more than doubled, from 268,000 in 1970 to 608,000 in 2006. The machines eliminated the routine transaction. But cheaper branch operations meant banks opened more locations, which created demand for tellers who could handle complex financial conversations. Economists call this Jevons Paradox: When technology makes something more efficient, demand expands rather than contracts.

Software engineers are bank tellers, not toll booth workers. AI agents are eliminating routine implementation: The boilerplate, the CRUD endpoints, the standard test scaffolding. But that efficiency is expanding the total surface area of what “engineering” means. Anthropic isn’t paying $570K for someone to type code. They’re paying for the judgment to orchestrate AI agents that type code: Deciding what to build, evaluating whether the output is correct, governing what gets deployed and maintaining systems that are increasingly written by machines.

Cherny confirmed this shift directly. His team now hires generalists over specialists, because traditional programming specialties are less relevant when AI handles implementation details. The skill premium has moved from writing code to supervising it, from production to orchestration.

The reason AI coding agents work

Here’s the question CIOs should be asking: Why are AI agents succeeding in software development faster than in any other enterprise function?

It’s not because coding models are better than models for customer service, legal review or financial analysis. The underlying LLMs are the same. The difference is that software development already had the infrastructure that every other enterprise function lacks.

Developers didn’t build this infrastructure for AI. They built it for themselves, over decades. But it maps almost perfectly to the six infrastructure gaps that are currently blocking AI agents from moving beyond employee-facing pilots into customer-facing production.

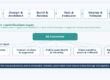

6 gaps the SDLC already solved

1. Governance: Right data, right users, right permissions

In software development, governance is built into the workflow. Branch protection, code review policies and role-based access controls create a clear chain of permission from draft to deploy, whether the author is human or agent.

Most enterprise functions have nothing equivalent. When an AI agent drafts a customer response, accesses a patient record or modifies a financial model, the governance layer (who approved this action, what data was it allowed to see, which policies constrain its output) is either ad hoc or absent. Microsoft’s 2026 Cyber Pulse survey found that while 80% of Fortune 500 companies have deployed AI agents, only 47% have agent-specific security policies in place.
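To make the idea of a “chain of permission” concrete, here is a minimal sketch of what an equivalent check might look like for a non-engineering agent action. The policy table, role names and the `ActionRequest` type are all hypothetical, invented for illustration. The logic mirrors branch protection plus code review: the role must be permitted, and someone other than the author must approve.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy table: which roles may perform which agent actions.
POLICY = {
    "draft_reply": {"support_agent", "supervisor"},
    "read_patient_record": {"clinician"},
    "modify_financial_model": {"finance_lead"},
}

@dataclass(frozen=True)
class ActionRequest:
    actor: str                         # human or agent identifier
    role: str                          # role the actor is operating under
    action: str                        # what the actor wants to do
    approved_by: Optional[str] = None  # reviewer, analogous to a PR approval

def is_allowed(req: ActionRequest) -> bool:
    """Allow only if the role is permitted AND a different party approved."""
    has_role = req.role in POLICY.get(req.action, set())
    has_review = req.approved_by is not None and req.approved_by != req.actor
    return has_role and has_review
```

Under these assumptions, an agent with the `support_agent` role can draft a reply once a human approves it, but it cannot read a patient record or approve its own work.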

2. Observability: Trace and audit the decision trail

Every line of AI-generated code has a paper trail. Git blame shows who (or what) wrote it. CI/CD pipelines log every build, test and deployment. When something breaks in production, engineers can trace the failure from alert to commit to the specific agent session that produced the change.

Outside of engineering, AI agent decisions are largely opaque. A customer-facing agent that denies a claim or escalates a complaint typically leaves no audit trail. Without observability, enterprises can’t debug bad outcomes, satisfy regulators or build the trust necessary to expand agent autonomy.
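As a rough illustration of the kind of decision trail a non-engineering agent could emit, the sketch below records each decision as a structured entry that can later be looked up by session, much as a commit can be traced to an agent session. The field names, session IDs and example decisions are assumptions made up for this example, not a standard schema.

```python
import uuid
from datetime import datetime, timezone

def audit_record(session_id, action, inputs, decision, reason):
    """Build one structured audit entry for a single agent decision."""
    return {
        "trace_id": str(uuid.uuid4()),   # unique ID for this decision
        "session_id": session_id,        # which agent session produced it
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "inputs": inputs,                # what data the agent saw
        "decision": decision,
        "reason": reason,                # the agent's stated justification
    }

def find_by_session(log, session_id):
    """Return every recorded decision made in the given session."""
    return [r for r in log if r["session_id"] == session_id]

# Hypothetical entries: a denied claim and an escalated complaint.
log = [
    audit_record("sess-42", "claim_review", {"claim_id": "C-1001"},
                 "denied", "policy lapsed before incident date"),
    audit_record("sess-43", "complaint_triage", {"ticket": "T-77"},
                 "escalated", "customer requested a supervisor"),
]
```

In practice the log would live in durable storage rather than a list, but the principle is the same: every decision carries enough context to be traced and reviewed later.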

3. Evaluation: Measure correctness at scale

Unit tests, integration tests, type checking, linting and automated QA give software engineering something no other enterprise function has: Continuous, objective measurement of whether AI-generated output is correct. That provides a foundation for proving an agent gets it right.

This is the gap other enterprise functions feel most acutely. DigitalOcean’s 2026 survey of 1,100 technology leaders found that 41% cite reliability as their number one barrier to scaling AI agents. Reliability is an evaluation problem: Without automated, continuous measurement of agent output quality, organizations can’t trust agents enough to put them in front of customers.
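One way to picture continuous evaluation outside of code is a test suite for agent answers: a fixed set of prompts, each paired with a check, and a release gate on the pass rate. The sketch below is illustrative only. The toy agent, the test cases and the 95% threshold are all assumptions, and real checks would be far richer than substring matches.

```python
def evaluate(agent, cases):
    """Return the fraction of cases whose output passes its check."""
    passed = sum(1 for prompt, check in cases if check(agent(prompt)))
    return passed / len(cases)

def release_gate(pass_rate, threshold=0.95):
    """Block deployment unless the pass rate meets the threshold."""
    return pass_rate >= threshold

# Stand-in for a real agent: it only knows about refunds.
def toy_agent(prompt):
    if "refund" in prompt:
        return "Refunds are issued within 14 days."
    return "I am not sure."

# Hypothetical test cases: (prompt, check on the output).
cases = [
    ("What is the refund window?", lambda out: "14 days" in out),
    ("How long do refunds take?", lambda out: "14 days" in out),
    ("Can I get a refund?", lambda out: "14 days" in out),
    ("What is your warranty?", lambda out: "warranty" in out.lower()),
]

rate = evaluate(toy_agent, cases)   # 3 of 4 pass, so 0.75
ready = release_gate(rate)          # False: below the 0.95 threshold
```

The point is less the arithmetic than the habit: the gate runs on every change, the same way a CI pipeline does.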

4. Memory: Persistent context beyond the context window

Developers take persistent context for granted. Version control, documentation and architectural decision records provide context that survives across sessions, teams and years. An AI coding agent can read the commit history, understand why a design choice was made in 2019, and factor it into today’s implementation.

Most enterprise AI agents operate in a memoryless state. Each customer interaction starts from scratch. Each agent session has no awareness of prior decisions, escalations or context beyond what fits in the context window. This is why employee-facing agents (IT help desks, NOC ticketing) succeed where customer-facing agents stall: Internal users tolerate repeating context. Customers do not.
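A minimal sketch of persistent context, assuming a simple JSON file stands in for whatever durable store an organization actually uses: notes written in one agent session are available to the next, so the customer does not have to repeat them. The `MemoryStore` class and its method names are hypothetical.

```python
import json
import tempfile
from pathlib import Path

class MemoryStore:
    """Keeps per-customer notes on disk so they outlive a single session."""

    def __init__(self, path):
        self.path = Path(path)

    def _load(self):
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def remember(self, customer_id, note):
        data = self._load()
        data.setdefault(customer_id, []).append(note)
        self.path.write_text(json.dumps(data))

    def recall(self, customer_id):
        return self._load().get(customer_id, [])

# Two separate "sessions" sharing one store on disk.
store_path = Path(tempfile.mkdtemp()) / "memory.json"
MemoryStore(store_path).remember("cust-1", "Escalated billing dispute")
history = MemoryStore(store_path).recall("cust-1")
```

The second `MemoryStore` instance knows nothing about the first except what was written to disk, which is the property a context window alone cannot provide.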

5. Cost controls: Manage LLM spend across providers

Jensen Huang’s $250K-per-engineer token budget isn’t an abstraction. It’s a real cost management challenge that engineering teams are already navigating. Smart teams route differently depending on the task: Use a lightweight model for boilerplate generation, a reasoning model for architectural decisions and a code-specific model for refactoring. They set token budgets per agent session. They measure cost-per-PR and cost-per-feature, not just cost-per-token.

Enterprises deploying AI agents in other functions rarely have this granularity. When Goldman Sachs stated that AI had near-zero GDP impact in 2025, the missing variable was cost discipline at the workflow level. Without the ability to route, throttle and measure LLM spend per agent task, scaling agents means scaling costs linearly, which eventually kills ROI.
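The routing, budgeting and measurement practices described above can be sketched in a few lines. Everything specific here is an assumption for illustration: the model names are placeholders, the per-token prices are invented rather than real quotes, and `AgentSession` is a hypothetical wrapper, not any vendor’s API.

```python
from dataclasses import dataclass

# Hypothetical routes: task type -> (model tier, USD per 1,000 tokens).
ROUTES = {
    "boilerplate": ("lightweight-model", 0.001),
    "architecture": ("reasoning-model", 0.030),
    "refactoring": ("code-model", 0.010),
}

class BudgetExceeded(Exception):
    """Raised when a task would push a session past its token budget."""

@dataclass
class AgentSession:
    token_budget: int
    tokens_used: int = 0
    cost_usd: float = 0.0

    def run(self, task_type, tokens):
        """Route the task to a model tier and charge it to this session."""
        if self.tokens_used + tokens > self.token_budget:
            raise BudgetExceeded(f"{task_type} needs {tokens} tokens")
        model, price_per_1k = ROUTES[task_type]
        self.tokens_used += tokens
        self.cost_usd += tokens / 1000 * price_per_1k
        return model

def cost_per_pr(sessions, merged_prs):
    """Workflow-level metric: total spend divided by merged pull requests."""
    return sum(s.cost_usd for s in sessions) / merged_prs
```

The same three moves apply outside engineering: route by task type, cap spend per session, and report cost against a business outcome (a resolved ticket, a processed claim) rather than against raw tokens.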

6. Deployment flexibility: Any cloud, on-prem, no lock-in

In software development, the runtime has always been portable. Code that runs on AWS today can run on Azure tomorrow, or on bare metal in your own data center. Containerization, Kubernetes and infrastructure-as-code tools like Terraform mean that engineering teams can change their minds about where workloads run without rewriting the application. Software has had this mindset for decades.

We’re early enough in this agentic development game that it’s tempting to take shortcuts. Organizations that build on a single hyperscaler’s agent framework find themselves locked into that provider’s model ecosystem, observability tooling and pricing structure. As agentic AI matures, deployment flexibility (the ability to run agents on any cloud, on-prem or across hybrid environments without vendor lock-in) will separate organizations that scale from those that stall.

Sometimes you’ll want agents to run close to your data. Other times, you’ll want agents close to the users. And you’ll want your developers to be able to move back and forth between different agent code bases without having to learn a different framework between them.
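A small sketch of what that portability can look like in code, assuming the agent logic depends only on a narrow interface rather than on a specific provider. The runtime classes, the region name and the selection rule are hypothetical stand-ins, not real SDK calls.

```python
from typing import Protocol

class AgentRuntime(Protocol):
    """The only contract the calling code depends on."""
    def run(self, task: str) -> str: ...

class CloudRuntime:
    def __init__(self, region: str):
        self.region = region

    def run(self, task: str) -> str:
        return f"[cloud:{self.region}] {task}"

class OnPremRuntime:
    def run(self, task: str) -> str:
        return f"[on-prem] {task}"

def pick_runtime(data_is_sensitive: bool) -> AgentRuntime:
    """Keep agents near sensitive data; otherwise run near the users."""
    return OnPremRuntime() if data_is_sensitive else CloudRuntime("us-east")

def handle(runtime: AgentRuntime, task: str) -> str:
    # Calling code is identical regardless of where the agent runs.
    return runtime.run(task)
```

Because `handle` never names a provider, moving a workload is a change to `pick_runtime`, not a rewrite of the agent.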

What CIOs should watch at Build and I/O

Google I/O and Microsoft Build will dominate May with dueling AI coding announcements. The temptation will be to compare model benchmarks. That’s the wrong lens. The models are converging. The real competition is one layer down, in the infrastructure that makes AI agents viable outside of software development.

CIOs watching these conferences should evaluate each announcement against the six gaps: Is Microsoft closing the governance gap with Azure AI Foundry? Is Google advancing observability through Vertex AI? Which platform is making it easier to evaluate agent output at scale, maintain persistent memory across sessions, control costs at the workflow level and deploy without lock-in?

The company that wins the AI coding war will be the one that builds the infrastructure layer that transfers to every other enterprise function. Those are the real stakes of May’s developer conferences, and they’re the real reason CIOs should be paying attention.

The canary’s message

Software engineers are the first knowledge workers to live inside a fully agentic workflow. They’re the canary in the coal mine for every other enterprise function. And right now, the canary is singing, not dying.

The lesson isn’t that AI coding agents have made engineers obsolete. It’s that AI coding agents work because engineers already built the infrastructure that makes agents trustworthy. Governance, observability, evaluation, memory, cost controls and deployment flexibility: These aren’t nice-to-haves. They’re the reason Anthropic can ship 27 AI-generated pull requests in a day and sleep at night.

Every other enterprise function will need to build its own version of that infrastructure before AI agents can move from employee-facing pilots to customer-facing production. The models aren’t the bottleneck. The scaffolding around them is.

Anthropic paying $570K for a software engineer whose job might not exist in a year isn’t a contradiction. It’s Jevons Paradox. And it’s the most expensive leading indicator in enterprise AI.

This article is published as part of the Foundry Expert Contributor Network.

