When I first started deploying AI systems at scale, I made the same mistake most technology leaders make: I treated security and data architecture as problems to solve after the intelligence layer was built. We moved fast, we shipped models and we celebrated early wins. Then, six months in, we discovered that one of our machine learning pipelines was inadvertently exposing sensitive customer data to downstream systems that had no business accessing it. No breach, no headlines but it was a wake-up call that reshaped how I think about AI architecture entirely.

The truth is, most organizations are building AI the wrong way. They invest heavily in model performance, infrastructure and compute, but treat data governance and security as afterthoughts. In my experience working across industries, this approach creates systems that are technically impressive but fundamentally fragile. Intelligence without integrity is just sophisticated risk.

This article outlines the framework I developed what I now call the Secure Intelligence Framework and how any technology leader can apply it to build AI systems that are both powerful and trustworthy.

Why security must be designed in, not bolted on

The instinct to move fast when deploying AI is understandable. Business pressure is real and AI projects often begin as proofs of concept that quietly grow into production systems before anyone has thought seriously about security.

But this sequencing is dangerous. According to the IBM Cost of a Data Breach Report 2024, the average cost of a data breach reached $4.88 million globally and organizations without AI and automation embedded in their security operations paid significantly more. Poorly architected AI systems expand an organization’s attack surface, creating new vulnerabilities through model APIs, training data pipelines and inference endpoints that traditional security frameworks were never designed to address.

The deeper problem is cultural. When security is treated as a deployment checklist rather than a design principle, teams inevitably cut corners under deadline pressure. I have seen organizations launch production AI systems with no access logging, no output monitoring and no rollback plan because those conversations happened after the build, not before it. By that point, the architecture is already set and retrofitting security is expensive, disruptive and often incomplete.

When I redesigned our AI architecture, I started from a single principle: every layer of the system must assume that every other layer is potentially compromised. This is zero-trust thinking applied to AI and it changes everything about how you design data flows, access controls and model governance. The NIST AI Risk Management Framework offers a strong foundation here it is one of the first documents I share with any team beginning a serious AI deployment.

Sunil Kumar Mudusu

The 3 layers of a secure AI system

The Secure Intelligence Framework is built on three interdependent layers. Each must be addressed independently and then integrated as a whole.

The data layer

This is where most vulnerabilities begin. I have seen organizations connect machine learning models directly to production databases with minimal access controls, reasoning that the model itself is not a user and therefore does not pose a risk. This thinking is wrong and expensive.

Data pipelines must enforce least-privilege access; every component of the AI system should access only the specific data it needs, nothing more. At one organization I worked with, implementing role-based access controls at the pipeline level alone reduced sensitive data exposure by over 60% without any impact on model performance. Equally important is data lineage. You must be able to answer, at any point, exactly what data trained a given model, where it came from and who had access to it. Without lineage, you cannot audit, you cannot comply and you cannot debug when something goes wrong.

The model layer

Once data is governed properly, attention turns to the models themselves. The key risks here are model inversion attacks, where adversaries extract training data from model outputs, and prompt injection in large language model deployments, where malicious inputs manipulate model behavior.

Defending against these threats means treating model endpoints like any other sensitive API authentication, rate limiting, output filtering and adversarial testing built into the deployment pipeline as standard practice. The OWASP Top 10 for Large Language Model Applications is one of the most practical references I have found for model-layer risk it catalogs the exact attack patterns that keep AI security teams up at night. When we deployed an NLP system for internal knowledge management, we added an output review layer that scanned responses for personally identifiable information before returning results to users. It added 40 milliseconds of latency. It was worth every millisecond.

The governance layer

This is the layer most often overlooked because it feels administrative rather than architectural. In reality, governance is what holds the other two layers together over time.

Governance means clear ownership for every model in production, who built it, who maintains it and who is accountable for its outputs. It means model versioning and rollback capabilities. And it means regular audits of both model performance and data access patterns. Microsoft’s Responsible AI Standard and Google’s Model Cards framework are both practical starting points that I have adapted in my own work. Neither is a plug-and-play solution, but both offer structured thinking that can be tailored to almost any organizational context.

What this looks like in practice

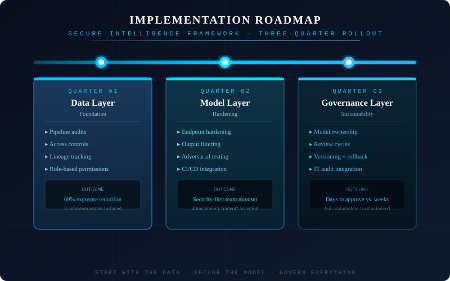

Implementing this framework does not require rebuilding everything at once. I introduced it using a phased approach over three quarters.

In the first quarter, we focused on the data layer auditing pipelines, implementing access controls and establishing lineage tracking. Unglamorous work, but it surfaced three data access issues we had not previously known existed. In two cases, internal teams had been querying datasets they were never authorized to use, simply because no restriction had been put in place.

In the second quarter, we addressed the model layer hardening endpoints, introducing output filtering and embedding adversarial testing into our CI/CD pipeline. The team developed a security-first mindset that made these changes feel natural rather than imposed.

In the third quarter, we formalized governance, assigning model owners, establishing review cycles and integrating model audits into existing IT processes. By year-end, we had a system our security team, legal team and business stakeholders could all trust. New AI projects that previously took weeks to approve were being scoped and greenlit in days because the foundational questions had already been answered at the architecture level.

Sunil Kumar Mudusu

Trust is architected, not assumed

Security and intelligence are not in tension they are complementary. The discipline that makes an AI system secure also makes it more reliable, more auditable and more explainable to the stakeholders who need to trust it.

AI is not a technology problem. It is a trust problem.

If you are building AI systems without a structured approach to data governance and security, you are not moving faster than your competitors. You are accumulating technical debt that will cost far more than the speed ever gained. The organizations that lead in AI over the next decade will not be those that deploy the most models; they will be those that deploy models people can trust.

Start with the data. Secure the model. Govern everything. The rest is execution.

This article is published as part of the Foundry Expert Contributor Network.

Want to join?

Read More from This Article: The secure intelligence framework: Architecting AI systems for a data-driven world

Source: News