Artificial intelligence has become an integral part of modern business operations, driving efficiencies, innovation and growth. As organizations increasingly rely on AI systems, the need for robust governance frameworks has never been greater.

AI governance refers to the policies, processes and structures that guide the development, deployment and oversight of AI technologies within an organization. The challenge lies in balancing the flexibility required for innovation with the oversight necessary to ensure ethical, safe and compliant use. Two central concepts have emerged in this context: controlled autonomy and guarded freedom.

Understanding controlled autonomy

Controlled autonomy in AI governance refers to granting AI systems and their development teams a defined level of independence within clear, pre-established boundaries. The organization sets specific guidelines, standards and checkpoints, allowing AI initiatives to progress without micromanagement but still within a tightly regulated framework. The autonomy is “controlled” in the sense that all activities are subject to oversight, periodic review and strict adherence to organizational policies.

Application in organizations

In practice, controlled autonomy might involve delegating decision-making authority to AI project teams while mandating compliance with risk assessment protocols, ethical guidelines and regulatory requirements. For example, an organization may allow its AI team to choose algorithms and data sources, but require regular reports and audits to ensure transparency and accountability. Automated systems may operate independently, yet their outputs are monitored for biases, errors or security vulnerabilities.
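The pattern described above can be illustrated with a minimal sketch. It assumes a hypothetical set of mandatory checkpoints and a hypothetical `AIProject` record; none of these names come from a real framework, and an actual organization would define its own checkpoints and sign-off process. The team picks the algorithm and data source freely, but deployment stays blocked until every checkpoint has been signed off and logged.

```python
from dataclasses import dataclass, field

# Hypothetical checkpoint names, chosen for illustration only.
REQUIRED_CHECKPOINTS = ("risk_assessment", "ethics_review", "regulatory_check")


@dataclass
class AIProject:
    name: str
    algorithm: str      # chosen freely by the team
    data_source: str    # chosen freely by the team
    passed_checkpoints: set = field(default_factory=set)
    audit_log: list = field(default_factory=list)

    def record_checkpoint(self, checkpoint: str, reviewer: str) -> None:
        """Mark a mandatory checkpoint as passed and log who signed off."""
        if checkpoint not in REQUIRED_CHECKPOINTS:
            raise ValueError(f"Unknown checkpoint: {checkpoint}")
        self.passed_checkpoints.add(checkpoint)
        self.audit_log.append((checkpoint, reviewer))

    def missing_checkpoints(self) -> list:
        """Return the checkpoints still outstanding, in policy order."""
        return [c for c in REQUIRED_CHECKPOINTS if c not in self.passed_checkpoints]

    def can_deploy(self) -> bool:
        """Deployment is allowed only once every checkpoint has passed."""
        return not self.missing_checkpoints()


project = AIProject("credit-scoring", algorithm="gradient_boosting",
                    data_source="loan_history")
project.record_checkpoint("risk_assessment", reviewer="risk-office")
print(project.can_deploy())           # False
print(project.missing_checkpoints())  # ['ethics_review', 'regulatory_check']
```

The audit log is what gives the model its traceability: each sign-off is recorded alongside the reviewer, so later reviews can reconstruct who approved what.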

Magesh Kasthuri

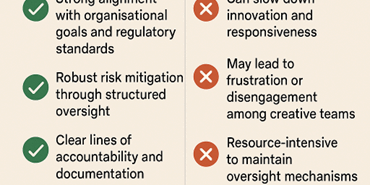

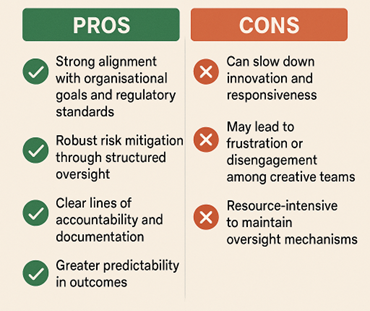

Benefits

- Ensures consistency with organizational objectives and regulatory frameworks

- Reduces the risk of unethical or unsafe AI behaviors

- Facilitates traceability and accountability for AI-driven decisions

- Supports compliance with data privacy and security requirements

Challenges

- Potential for slower innovation due to rigorous oversight

- May lead to bureaucratic delays and reduced agility

- Risk of stifling creative problem-solving among AI teams

- Requires substantial resources for monitoring and enforcement

Exploring guarded freedom

Guarded freedom in AI governance represents a more flexible approach, where AI systems and teams operate with considerable independence, but still within a framework of essential safeguards. The “freedom” is “guarded” by core principles, ethical standards and minimal but effective controls. This model emphasizes trust in the expertise of AI professionals while maintaining a safety net to prevent critical risks.

Application in organizations

Organizations embracing guarded freedom may empower AI teams to explore innovative solutions and rapidly iterate, intervening only at key stages or when certain thresholds are crossed. For instance, a company might permit the use of experimental algorithms without prior approval, provided that all projects undergo a final ethical and compliance review before deployment. The focus is on enabling creativity and adaptability with safeguards in place to catch major issues.
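As a rough sketch of this lighter-touch model, the snippet below lets experiments proceed without prior approval, escalates only when a metric crosses a threshold, and holds deployment until a final review has passed. The threshold values, the `Experiment` fields and the function names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical thresholds: illustrative values, not recommendations.
MAX_BIAS_GAP = 0.10    # largest tolerated gap in outcome rates between groups
MAX_ERROR_RATE = 0.05  # largest tolerated error rate


@dataclass
class Experiment:
    name: str
    bias_gap: float
    error_rate: float
    final_review_passed: bool = False


def needs_intervention(exp: Experiment) -> bool:
    """Oversight steps in only when a safeguard threshold is crossed."""
    return exp.bias_gap > MAX_BIAS_GAP or exp.error_rate > MAX_ERROR_RATE


def next_step(exp: Experiment, stage: str) -> str:
    """Decide what happens next; stage is 'experiment' or 'deploy'."""
    if needs_intervention(exp):
        return "escalate"
    if stage == "deploy" and not exp.final_review_passed:
        return "hold_for_final_review"
    return "proceed"


trial = Experiment("new-ranker", bias_gap=0.02, error_rate=0.01)
print(next_step(trial, "experiment"))  # proceed
print(next_step(trial, "deploy"))      # hold_for_final_review
```

Compared with a checkpoint-per-stage design, the only hard gates here are the thresholds and the final review, which is where the speed of this model comes from and also where its risk concentrates.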

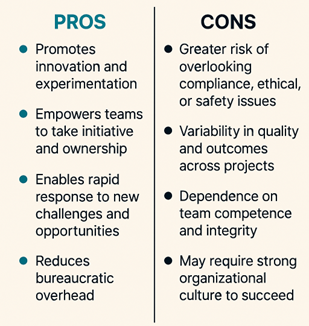

Benefits

- Encourages innovation and rapid development cycles

- Fosters a culture of trust and responsibility among employees

- Allows for quick adaptation to changing market conditions

- Minimizes unnecessary bureaucracy

Challenges

- Increased risk of noncompliance or unintended consequences

- Potential for ethical lapses or security vulnerabilities to go unnoticed

- Difficulty in ensuring consistency across multiple projects

- Relies heavily on the integrity and competence of individuals

The table below compares controlled autonomy and guarded freedom across key aspects of governance.

| Aspect | Controlled Autonomy | Guarded Freedom |
| --- | --- | --- |
| Level of Oversight | High, with regular reviews and strict policies | Moderate, with key safeguards and minimal intervention |
| Decision-Making Power | Delegated, but within defined boundaries | Broad, with responsibility placed on teams |
| Innovation Potential | Moderate, possibly limited by bureaucracy | High, due to greater flexibility |
| Risk Management | Proactive, with frequent risk assessments | Reactive, intervening when issues arise |
| Accountability | Centralized, clear documentation of decisions | Distributed, relies on team integrity |
| Speed of Execution | Slower, due to checks and controls | Faster, with fewer formalities |
| Compliance | High, enforced by policies | Variable, depending on team vigilance |

Which option to choose

Deciding between controlled autonomy and guarded freedom in AI governance largely depends on the nature of the enterprise, its industry and the specific risks involved. Controlled autonomy is best suited for sectors where regulatory compliance and risk mitigation are paramount, such as banking, healthcare or government services.

For example, a financial institution deploying AI for credit scoring would benefit from tightly defined controls, ensuring transparency, audit trails and adherence to strict policies.

Conversely, guarded freedom is more appropriate in innovative environments where agility and rapid experimentation are vital, such as technology start-ups or creative agencies. Here, a software firm developing new AI-powered products may grant its teams more latitude, encouraging novel solutions while maintaining basic ethical guidelines and oversight.

Ultimately, organizations should assess their unique context and goals, choosing a governance approach that safeguards interests without stifling progress or initiative.

Unlocking AI’s potential, but safely

Both controlled autonomy and guarded freedom offer valuable frameworks for AI governance, each with distinct strengths and potential drawbacks. The optimal approach often lies in a tailored blend that aligns with organizational culture, risk appetite and strategic objectives.

Business leaders should strive to establish clear policies and safeguards while also nurturing a culture of trust, creativity and responsibility. Regular training, transparent communication and adaptive oversight mechanisms are essential for fostering responsible AI innovation. By thoughtfully balancing autonomy and oversight, organizations can unlock AI’s potential while safeguarding their reputation, stakeholders and society at large.

This article was made possible by our partnership with the IASA Chief Architect Forum. The CAF’s purpose is to test, challenge and support the art and science of Business Technology Architecture and its evolution over time as well as grow the influence and leadership of chief architects both inside and outside the profession. The CAF is a leadership community of the IASA, the leading non-profit professional association for business technology architects.

This article is published as part of the Foundry Expert Contributor Network.
