Most organizations today include AI in their strategic roadmaps. These strategies often focus on selecting technologies, defining use cases and executing deployments. Yet many fail to generate sustained impact.

What is often missing is not ambition or capability, but a clear design for how AI should be deployed into real work.

This gap explains a familiar pattern:

- Pilots that never scale

- Tools that generate resistance instead of value

- Automation that erodes judgment

- “AI adoption” initiatives disconnected from daily reality

The problem is rarely the AI itself. It is the absence of deployment design: the deliberate architecture that connects strategic intent with how work is performed. This idea echoes earlier work on augmenting human intellect, which framed technology not as a replacement for human capability but as a way to extend it.

From AI strategy to AI deployment

Traditional AI strategies tend to focus on four things: capabilities, data and platforms, governance and risk, and lists of potential use cases.

These elements are necessary but insufficient, because they explain what AI can do but not how it should be integrated into the organization without distorting how people work, think and decide. Deploying AI is therefore not a simple technical rollout but a design problem. Research on human–AI collaboration, including work published by California Management Review, consistently shows that value emerges when AI systems are designed to complement human judgment rather than replace it.

Different types of work require different forms of AI: Some tasks benefit from direct automation, others demand supervision, some should remain human but supported by cognitive guidance rather than production, and a few should not be touched by AI at all — at least not yet. Applying the same deployment logic everywhere is how AI strategies fail.

What is AI strategy deployment design?

AI strategy deployment design is the discipline that defines how AI should be introduced into work — in what form, at what scale and with what type of human–AI relationship.

Rather than treating AI as a generic capability, this discipline frames it as an intervention into work, with cognitive, cultural and organizational consequences.

The goal is not maximal automation, but the right fit between AI and the nature of the work.

Deployment design provides a structured way to translate AI strategy into coherent forms of deployment across an organization.

Instead of starting from technologies or use cases, the framework starts from work itself — how it is performed, by whom, at what scale and with what cognitive and cultural implications.

Its purpose is not to maximize AI usage, but to design the right form of AI intervention for each type of work, ensuring alignment between strategic intent and everyday execution.

The 4 core elements of the deployment design

The framework is built on four foundational elements. Together, they allow organizations to reason systematically about how AI should be deployed, not just where. Importantly, this does not reject a task- or process-level focus; rather, it reframes it. The true scope of deployment is the task as it exists within a specific role, context and way of working — not the task in isolation.

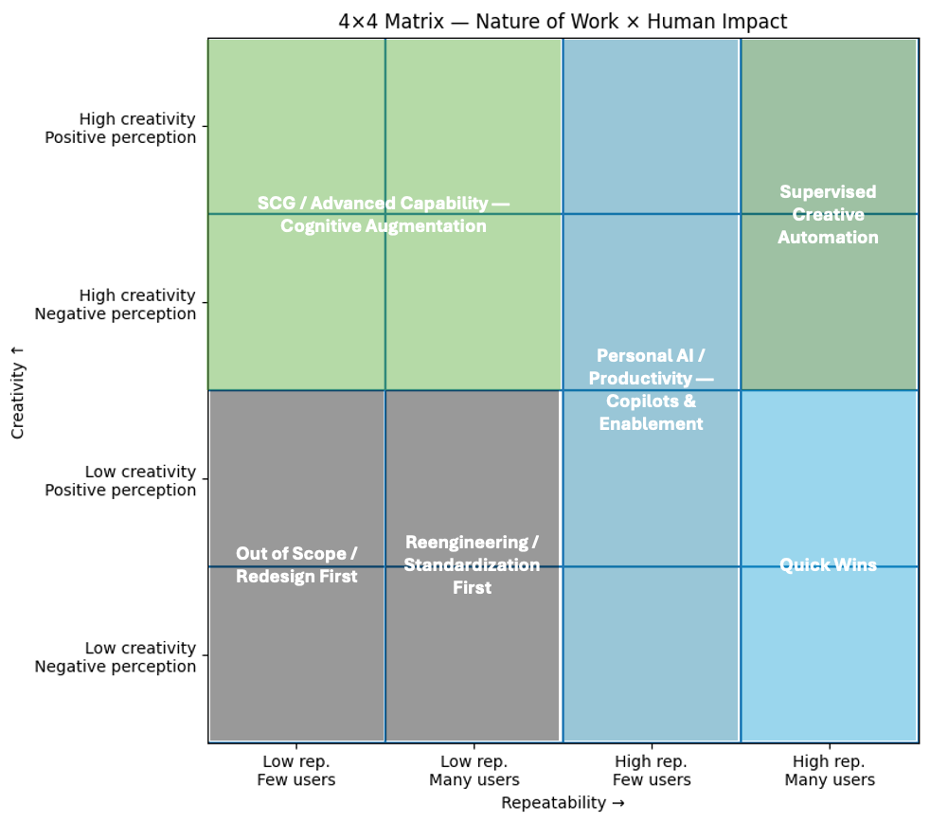

1. Nature of the work (Repeatability × Creativity)

This dimension captures whether work is repetitive or variable, and whether it requires judgment, originality or non-deterministic thinking.

It distinguishes between:

- Mechanical work suitable for automation

- Creative work requiring augmentation or supervision

- Work that should remain primarily human

2. Scale of impact (Users affected)

The same task requires different deployment approaches depending on whether it is performed by a few specialists or across large populations.

Scale determines whether AI should be:

- Personal and flexible

- Standardized and organizational

- Governed through explicit controls

3. Perception of the task (Positive × Negative)

Beyond structural characteristics, the framework explicitly considers how a task is experienced by the people who perform it. Task perception captures whether an activity is generally seen as valuable, meaningful and identity-building, or as burdensome, frustrating and low-value.

This dimension does not determine whether AI can be applied, but strongly influences how it should be introduced. In highly repetitive, low-creativity work, perception mainly affects adoption narratives and change management. In creative or judgment-heavy work, perception often signals whether creativity is authentic or degraded, and whether AI should automate, augment or stay out altogether.

4. Deployment intent

Different interventions pursue different intents:

- Efficiency and cost reduction

- Individual productivity

- Development of advanced capabilities

- Quality, consistency and risk control

Making deployment intent explicit avoids hidden mismatches between expectations, outcomes and organizational response. It also creates the necessary bridge to a subsequent, more technical decision layer: Once the deployment intent and the nature of the work are clear, organizations can then assess which type of solution is most appropriate — whether AI-based, RPA-driven or a traditional information system — as well as the associated implementation complexity. While this solution-selection step is critical, it sits outside the scope of this article, which deliberately focuses on the deployment design framework itself.

AI strategy deployment design

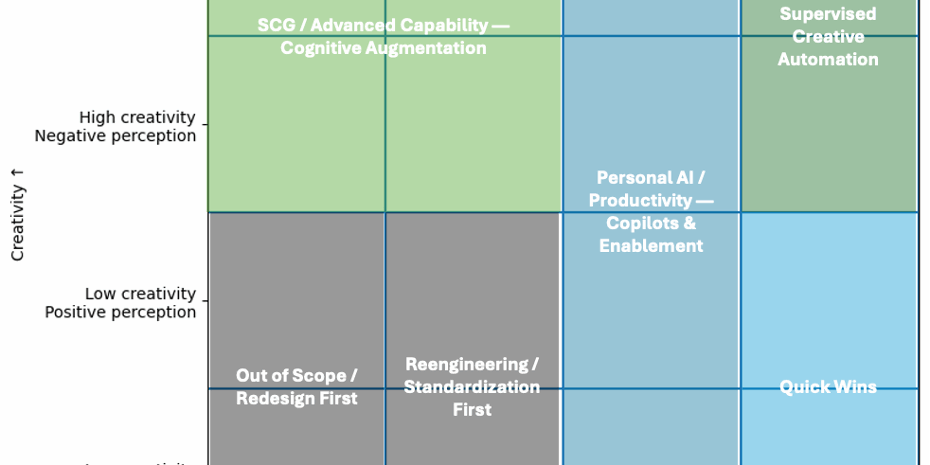

Together, these elements form a structured matrix that maps types of work to appropriate AI deployment patterns.

This matrix is not a prioritization tool, but a design instrument. It visualizes dominant deployment logics rather than cataloguing every possible case.

From this structure, six deployment zones emerge, grouped into five dominant logics.

Raúl García Vega

Based on the matrix, the framework consolidates the space into six deployment zones. These zones are not strict categories, but recurring patterns that describe how AI should be deployed given the nature of work and its human impact.

The detailed 16‑cell grid supports rigor and operational use. For clarity, the article focuses on these six zones, which capture the essential deployment logic.

| Zone | Type of work | Deployment logic | What to do | What to avoid |
| --- | --- | --- | --- | --- |
| Out of Scope / Redesign First | Low creativity · Low repeatability | Not an AI problem | Eliminate, simplify, redesign | Automating broken work |
| Reengineering and Standardization First | Low creativity · Low repeatability · Scale | Stabilize before AI | Standardize, define rules, clarify processes | Premature automation |
| Quick Wins: Direct Automation | High repeatability · Low creativity · Many users | Efficiency at scale | Automate safely (AI/RPA) | Overengineering |
| Personal AI / Productivity | High creativity · High repeatability · Few users | Individual augmentation | Copilots, flexible tools, enablement | Standardizing outputs |
| SCG / Cognitive Augmentation | High creativity · Low repeatability | Cognitive support | Co-create, review, explore with AI | Replacing human judgment |
| Supervised Creative Automation | High creativity · High repeatability · Many users | Scaled creative systems | Agentic platforms + supervision | Uncontrolled automation |
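For readers who prefer to see the table as logic, the zone assignment can be sketched as a small lookup. This is an illustrative simplification, not the author's tool. It assumes each dimension collapses to a binary high/low value, whereas the article's detailed grid has 16 cells. It also reads "Scale" as "many users". The `WorkProfile` class and `deployment_zone` function are hypothetical names. One combination (low creativity, high repeatability, few users) does not appear in the table, so the sketch returns `None` for it rather than guessing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WorkProfile:
    """Hypothetical binary simplification of the table's three dimensions."""
    high_creativity: bool
    high_repeatability: bool
    many_users: bool


def deployment_zone(profile: WorkProfile) -> Optional[str]:
    """Map a work profile to a zone name from the table, or None if unlisted."""
    if profile.high_creativity:
        if not profile.high_repeatability:
            # The table gives no scale condition for this zone.
            return "SCG / Cognitive Augmentation"
        if profile.many_users:
            return "Supervised Creative Automation"
        return "Personal AI / Productivity"

    # Low-creativity work from here on.
    if not profile.high_repeatability:
        # Assumption: "Scale" in the table means many users.
        if profile.many_users:
            return "Reengineering and Standardization First"
        return "Out of Scope / Redesign First"

    if profile.many_users:
        return "Quick Wins: Direct Automation"

    # Low creativity, high repeatability, few users: not listed in the table.
    return None


# Example: repetitive, low-judgment work done by a large population.
invoice_matching = WorkProfile(
    high_creativity=False, high_repeatability=True, many_users=True
)
zone = deployment_zone(invoice_matching)
```

Task perception and deployment intent are deliberately left out of the lookup. In the framework they shape how a zone's intervention is introduced, not which zone a task falls into.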

Conclusion

Beyond frameworks and matrices, the current market context matters. After years of inflated expectations, organizations are increasingly fatigued by abstract AI promises and are shifting toward practical, reusable use cases and plug-and-play solutions that promise fast results.

This shift is understandable — and in some areas effective. However, relying exclusively on standardized solutions overlooks a structural reality: Successful AI deployment depends less on technology and more on understanding how work is actually performed.

Jobs are not defined by a single type of task or a single deployment zone. In practice, most roles combine activities that span multiple zones of the framework. This mix — rather than any individual task — determines how AI should be introduced, governed and scaled within an organization. Treating roles as monolithic leads to oversimplification and unrealistic expectations.
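One way to make a role's mix visible is to tally how its activities spread across zones. The sketch below is only an illustration under stated assumptions: the `zone_mix` helper, the example role and the hours are all hypothetical, and weighting by weekly hours is a convenience chosen here, not something the framework prescribes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def zone_mix(activities: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Return each zone's share of a role's total hours (shares sum to 1)."""
    hours: Dict[str, float] = defaultdict(float)
    for zone, spent in activities:
        hours[zone] += spent
    total = sum(hours.values())
    if total == 0:
        return {}
    return {zone: spent / total for zone, spent in hours.items()}


# Hypothetical role: activities and hours are illustrative, not from the article.
analyst_week = [
    ("Quick Wins: Direct Automation", 10.0),   # recurring report assembly
    ("Personal AI / Productivity", 20.0),      # drafting and analysis
    ("SCG / Cognitive Augmentation", 10.0),    # one-off strategic questions
]
mix = zone_mix(analyst_week)
```

A role whose hours sit mostly in one zone can follow that zone's deployment logic. A role split across several zones needs a combination of interventions, which is the point the paragraph above makes.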

For this reason, managing expectations is as important as selecting technology. In most cases, AI deployment will continue to require human intervention, supervision and judgment by design. Not everything is, or should be, fully automatable.

Ultimately, the AI strategy deployment design framework shifts the conversation away from where to use AI toward a more durable question: What type of human–AI relationship makes sense for each form of work, and where must human judgment remain by design?

This article is published as part of the Foundry Expert Contributor Network.
